Midnight Bombshell: OpenAI Open-Sources GPT! Runs on Tablets and Phones, Free for Everyone

👉 Project Official Website: https://www.python-office.com/ 👈

👉 Discussion Group for This Open-Source Project 👈

Hi everyone, I'm 程序员晚枫, currently focused full-time on hands-on AI programming. There have been so many big open-source releases lately:

- Open-Sourcing Qwen3-Coder Is a Top-Tier AI Power Move — Alibaba's Ambitions Revealed

- 39,900 RMB Humanoid Robot Arrives — Unitree Also Open-Sourced Its Core Technology

Even the usually secretive OpenAI couldn't resist, open-sourcing two models yesterday at 3 AM:

GPT-oss-120b and GPT-oss-20b, free for commercial use, and they run on tablets and phones!

Open-source URL: https://github.com/openai/gpt-oss

Two Models Released at 3 AM

At 3 AM, OpenAI set the AI community ablaze once again.

Sam Altman himself tweeted: "This is our first truly open-source large model since GPT-2."

One sentence, and every developer's sleepiness vanished.

This time OpenAI dropped two models at once:

• GPT-oss-120b: 117 billion parameters (hence "120b"), a "full-power" reasoning beast that fits on a single 80 GB GPU

• GPT-oss-20b: 21 billion parameters, a lightweight version that needs only about 16 GB of memory, small enough for laptops and even phones

Two keywords: Open Weights + Free to Use.

OpenAI has re-embraced open source, saying that one reason for releasing an open-source system is that some companies and individuals prefer to run such technology on their own computer hardware.

That means from today, you can take the 120B model home, run it offline, use it commercially, without paying a single cent.

Notably, both models are released under the Apache 2.0 license, the same license as the Coze open-source release. Is Apache 2.0 becoming the default standard for building commercial empires through open source?

Extreme Performance

Less than a day after release, GitHub stars already exceeded 6,900.

For comparison, our WeChat QR Code has been out for over 3 years and only has 1,100 stars. Truly envious.

Why is it so popular?

1. Performance on Par with o4-mini

When I saw this claim, I was very skeptical, but since OpenAI's co-founder and CEO is vouching for it personally, I'm eager to try it.

Altman's exact words: "Real-world performance comparable to o4-mini."

If you've been amazed by o4-mini's reasoning before, now you can recreate it locally.

2. Truly Private, Local Deployment

Download the model and run it: no internet connection, no API key, no worries about privacy leaks.

Finance, healthcare, government: sensitive scenarios like these can adopt it right away.

3. First Open-Source Return in Six Years

The last time OpenAI open-sourced a language model was GPT-2 in 2019. Six years later, they pull out the big guns: 120B, straight away.

Industry insiders joked: "OpenAI finally picked up the 'Open' again."

Am I the only one who, on first hearing the name "OpenAI," assumed all of its products were open source?

Official Code Demo

How easy is it to get started?

The official examples are already out:

Code source: https://github.com/openai/gpt-oss

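```python
from transformers import pipeline
import torch  # imported in the official example; not used directly below

model_id = "openai/gpt-oss-120b"  # or "openai/gpt-oss-20b" for the smaller model

# Build a chat-capable text-generation pipeline; dtype and device placement are chosen automatically
pipe = pipeline(
    "text-generation",
    model=model_id,
    torch_dtype="auto",
    device_map="auto",
)

messages = [
    {"role": "user", "content": "Please introduce programmer Wanfeng and his open-source projects"},
]

outputs = pipe(
    messages,
    max_new_tokens=256,  # cap on the length of the generated reply
)

# The last message in the returned conversation is the model's reply
print(outputs[0]["generated_text"][-1])
```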
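When the `text-generation` pipeline receives chat-style input, it returns the whole conversation with the model's reply appended as the last message. That is why the demo indexes `[-1]`. The sketch below pulls out just the reply text. `extract_reply` is a hypothetical helper name, and the `sample_outputs` value is hand-written to mirror that shape. It is not real model output.

```python
from typing import Any


def extract_reply(outputs: list[dict[str, Any]]) -> str:
    """Return the assistant's reply text from a chat-style pipeline result.

    Assumes the shape produced by a text-generation pipeline called with a
    list of chat messages: outputs[0]["generated_text"] is the conversation,
    and its last element is the newly generated assistant message.
    """
    conversation = outputs[0]["generated_text"]
    last_message = conversation[-1]
    # Guard against inputs where no assistant reply was appended
    if last_message.get("role") != "assistant":
        raise ValueError("last message is not an assistant reply")
    return last_message["content"]


# Hypothetical, hand-written example of the result shape (not real model output)
sample_outputs = [
    {
        "generated_text": [
            {"role": "user", "content": "Please introduce programmer Wanfeng and his open-source projects"},
            {"role": "assistant", "content": "(model reply goes here)"},
        ]
    }
]

print(extract_reply(sample_outputs))
```

Printing only the `content` string gives you plain text rather than the whole message dict.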

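On hardware: according to OpenAI, gpt-oss-120b runs on a single 80 GB GPU (such as an H100) thanks to native MXFP4 (4-bit) quantization. gpt-oss-20b needs only about 16 GB of memory, so a consumer card like the RTX 4090 (24 GB) can run it, and at 4-bit it can even run on a Snapdragon 8 Gen3 phone.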
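Whether a model fits on a given device comes down to simple arithmetic: the number of parameters times the bits per weight, divided by 8 to get bytes. Below is a rough sketch. `weight_memory_gb` and `weights_fit` are hypothetical helper names, and the parameter counts are the nominal sizes from the model names. The sketch counts weights only, ignoring activations, the KV cache, and framework overhead. It also treats 4-bit as exactly 4 bits per weight, although formats such as MXFP4 add a small per-block scale overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes).

    Ignores activations, the KV cache, and framework overhead, so real usage
    is higher than this lower bound.
    """
    return num_params * bits_per_weight / 8 / 1e9


def weights_fit(num_params: float, bits_per_weight: float, device_gb: float) -> bool:
    """True if the weights alone fit in the given device memory."""
    return weight_memory_gb(num_params, bits_per_weight) <= device_gb


# Nominal sizes taken from the model names (not exact parameter counts)
for name, params in [("120b", 120e9), ("20b", 20e9)]:
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params, bits)
        print(f"{name} @ {bits}-bit: ~{gb:.0f} GB, fits in 24 GB: {weights_fit(params, bits, 24)}")
```

Even at 4 bits per weight, the 120b weights alone come to roughly 60 GB, far beyond a 24 GB consumer card. The 20b model at 4 bits needs only about 10 GB.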

Community Reaction and Industry Impact

The community is exploding:

• GitHub Stars hit 20k in 5 hours

• Shot straight to the top of the Hugging Face download charts

• First Issue: "Please add Chinese SFT!"

Over the next week, expect fine-tuning tutorials, LoRA adapters, and Chinese-tuned variants to spring up like bamboo shoots after rain.

What does this mean for the industry?

- Private deployment cost drops from millions of dollars to a single graphics card.

- SaaS vendors' moats get eroded by "free and local"; they will have to compete on service and on use cases.

- New opportunities for entrepreneurs: vertical models + local deployment, making "small but beautiful" industry GPTs.

Closing Thoughts

When GPT-2 was open-sourced, it helped fuel Hugging Face's Transformers library and the broader large-model ecosystem.

Today, GPT-oss-120b lowers the barrier to the floor once again.

Everyone can stand on the shoulders of 120B and rewrite their own industry.

The wheel of history has started rolling — this time, you're not just an audience member.

— END —

Like, comment, and share! What are you planning to build with GPT-oss? Tell me in the comments.

Reference Articles

- Comparison images and open-source approach: https://baijiahao.baidu.com/s?id=1839683944144556846&wfr=spider&for=pc

Also, please go like Xiao Ming's Xiaohongshu account below! I'm tired of working hard and want to be a kept man.

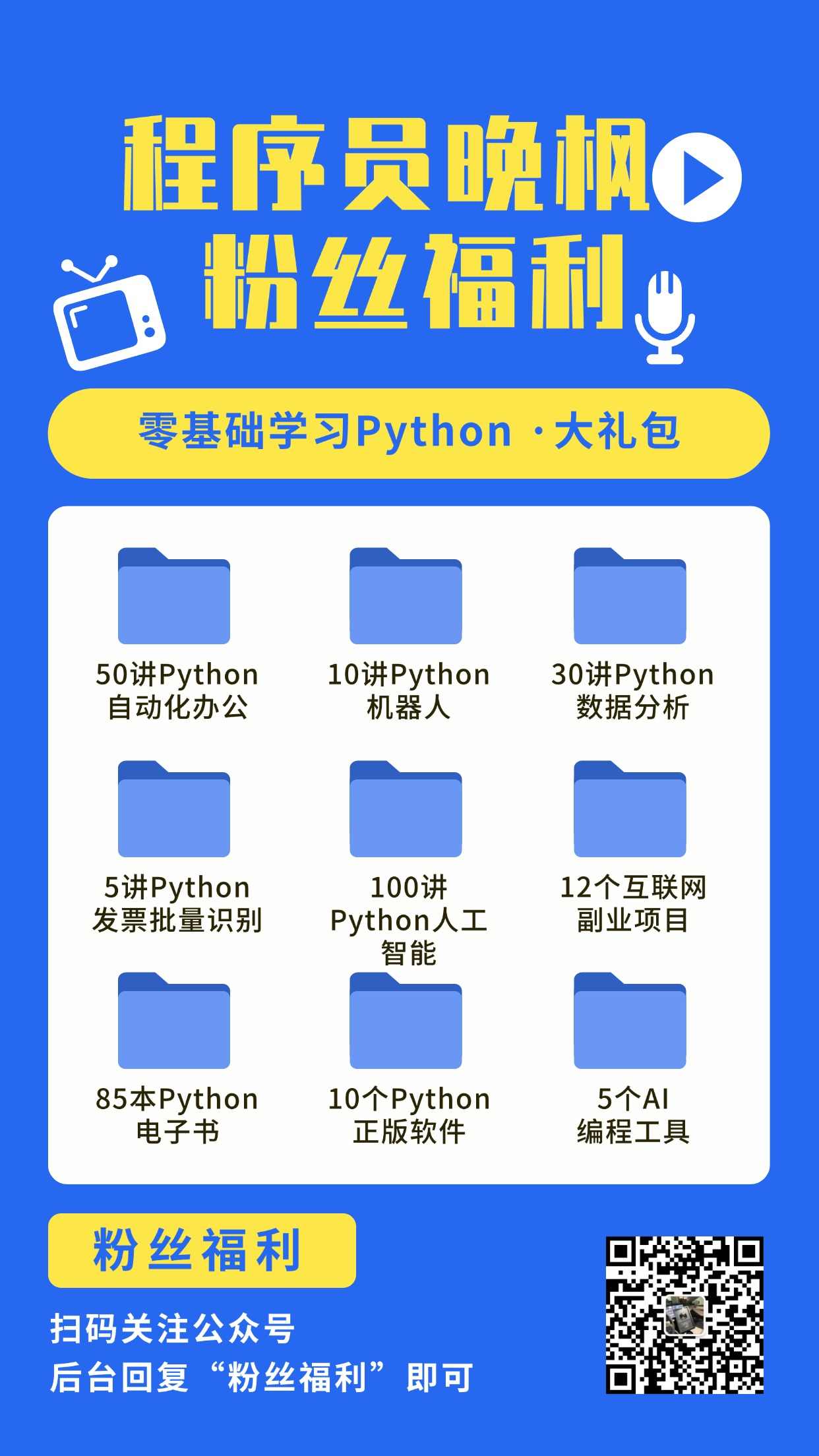

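程序员晚枫 focuses on AI programming training. Even complete beginners can start building AI projects after working through his tutorial with Turing Community, "30 Lessons · AI Programming Bootcamp."

🎓 AI Programming Hands-On Course

Want to learn AI programming systematically? 程序员晚枫's AI Programming Hands-On Course takes you from zero to building real projects!

- 👉 Course registration: click here to sign up; the first 3 lessons are a free trial

- 👉 Free preview: watch the first 3 lessons for free on Bilibili and see whether the course suits you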