DeepSeek V3.1 Launched Late at Night! Is It Any Good This Time?


AI now iterates so fast that even the complaints can't keep up.

On the evening of August 19, DeepSeek quietly announced in its official group that the online model had been upgraded to V3.1. There was no teaser poster, no press conference, not even a Weibo post, just an understated "context window extended to 128k".

This is already DeepSeek's third major update this year, coming only five months after the V3-0324 release on March 25 and less than three months after the minor R1 upgrade on May 28.

01 Speed Mania

How intense is the competition in AI? One company has shipped three major updates within five months. DeepSeek's iteration speed is reminiscent of the smartphone-era arms race, only several times faster.

Anthropic recently released Claude Opus 4.1, which scores 74.5% on the SWE-bench Verified coding benchmark. Google DeepMind is not to be outdone, launching the Genie 3 world model, which can generate interactive 3D environments in real time.

Under such competitive pressure, DeepSeek seems to have chosen to outrun slower rivals with sheer speed.

02 How Good Is V3.1?

According to official statements, the most significant improvement in V3.1 is the expansion of the context window from 64k to 128k tokens. In Chinese text, that is roughly 100,000 to 130,000 characters, enough to swallow an entire novel such as Rickshaw Boy or To Live in one go.
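As a rough back-of-the-envelope check, those figures imply somewhere between about 0.78 and 1.02 Chinese characters per token. The sketch below turns that ratio into a quick fit test. The function names, the 128,000-token reading of "128k", and the default ratio are illustrative assumptions, not values taken from DeepSeek's tokenizer.

```python
# Rough estimate only: real token counts depend on the model's tokenizer.
CONTEXT_WINDOW_TOKENS = 128_000  # assumed reading of "128k"


def implied_chars_per_token(char_count: int, window: int = CONTEXT_WINDOW_TOKENS) -> float:
    """Characters per token implied by a 'the window holds N characters' claim."""
    return char_count / window


def fits_in_context(text: str, chars_per_token: float = 0.78,
                    window: int = CONTEXT_WINDOW_TOKENS) -> bool:
    """Estimate whether `text` fits, using a conservative characters-per-token ratio."""
    estimated_tokens = len(text) / chars_per_token
    return estimated_tokens <= window


low = implied_chars_per_token(100_000)   # lower bound of the article's range
high = implied_chars_per_token(130_000)  # upper bound of the article's range
```

Using the lower ratio as the default keeps the estimate conservative: a text that passes this check should fit even if the tokenizer turns out to be less efficient than the upper figure suggests.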

Performance on multi-step reasoning tasks is said to have improved by 43% over the previous generation, with higher accuracy on complex tasks such as mathematical calculation, code generation, and scientific analysis. The rate of "hallucinations" (generated false information) reportedly fell by 38%, making outputs noticeably more reliable.

For multilingual support, V3.1 can handle more than 100 languages, with particular gains in Asian and minority languages.

03 User Experience Improvements

For ordinary users, what does a 128k context window mean?

Long document analysis becomes easy. There is no need to cut long texts into pieces before feeding them to the AI; you can hand entire books or entire sets of project documentation to DeepSeek V3.1 directly.

Programmers have reason to be happy: the model can now better understand large codebases and give more accurate code reviews and suggestions. Some users have also praised its front-end code generation.

Multi-turn conversations are more coherent as well. The model can retain longer conversation histories, which reduces awkward "goldfish memory" lapses.

04 When Will R2 Come?

Although V3.1 brings many improvements, what users are clearly most eager for is the next-generation model, DeepSeek-R2.

Market rumors had put the R2 release between August 15 and 30, but sources close to DeepSeek said the report was untrue and that the company currently has no specific release plan.

Foreign media reports say that DeepSeek R2 encountered serious errors during training due to chip issues, which may be one of the reasons for the delayed release. CEO Liang Wenfeng's perfectionist tendencies are also considered an influencing factor.

05 Open Source Commitment

DeepSeek reaffirmed its long-term commitment to the open source community, stating that it will continue to follow its open source release strategy and provide technical support to the global AI research community and developers.

DeepSeek has done a lot of work in open source, covering models, high-performance computing libraries, file systems and other aspects. Below I have compiled its main open source project addresses, hoping to help you quickly find the resources you need.

| Category | Project Name | GitHub URL |
|---|---|---|
| Large Models | DeepSeek-V3-0324 | https://github.com/deepseek-ai/DeepSeek-V3-0324 |
| Large Models | DeepSeek-R1-0528 | https://github.com/deepseek-ai/DeepSeek-R1-0528 |
| Infrastructure | 3FS | https://github.com/deepseek-ai/3FS |
| Infrastructure | Smallpond | https://github.com/deepseek-ai/smallpond |
| High Performance Computing | DeepEP | https://github.com/deepseek-ai/DeepEP |
| High Performance Computing | DeepGEMM | https://github.com/deepseek-ai/DeepGEMM |
| High Performance Computing | FlashMLA | https://github.com/deepseek-ai/FlashMLA |
| Training Optimization | DualPipe | https://github.com/deepseek-ai/DualPipe |
| Training Optimization | EPLB | https://github.com/deepseek-ai/eplb |
| Training Optimization | Profile-Data | https://github.com/deepseek-ai/profile-data |
| Index & Collaboration | open-infra-index | https://github.com/deepseek-ai/open-infra-index |

As of press time, the V3.1 model weights were not yet available for download on Hugging Face. Based on DeepSeek's past practice, though, the open source release will likely follow soon.

06 International Competition Landscape

DeepSeek's speed and popularity already pose a challenge to American incumbents like OpenAI. It shows how Chinese companies can make significant progress in artificial intelligence at what appears to be a fraction of the cost.

Against the backdrop of increasingly fierce global AI technology competition, DeepSeek's rapid product iteration strategy fully demonstrates its technological innovation capabilities and market response speed.

Despite facing restrictions on accessing high-end computing resources due to international sanctions, DeepSeek still maintains a strong competitive advantage in the field of open source large language models through innovative and efficient training methods and optimization strategies.

The way the API is called remains unchanged, so developers can switch over without modifying their code. Only the digit after the decimal point in the version number has changed.

But the improvements in user experience are real: a longer context window, more accurate reasoning, fewer hallucinations. Technological iteration no longer has to chase disruptive change through major version numbers.

Perhaps we are entering a new stage of AI development: from chasing explosive growth in parameter counts to refining the practical user experience.
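To make "unchanged" concrete, here is a minimal sketch of what a request to the hosted service might look like. It assumes the OpenAI-compatible chat endpoint at `https://api.deepseek.com/chat/completions` and the `deepseek-chat` model name; neither appears in the announcement, so check both against the official API documentation. The helper names `build_chat_request` and `send` are hypothetical.

```python
import json
import urllib.request

# Assumed values: verify against the official API documentation before use.
API_URL = "https://api.deepseek.com/chat/completions"
MODEL_NAME = "deepseek-chat"


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request over the network and return the decoded JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

If the model name and request shape really do stay the same across the upgrade, code like this would start receiving V3.1 responses without any edits, which is all "seamless" means here.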

Also, everyone please go give Xiaoming's Xiaohongshu account👇 a like~! I don't want to work hard anymore, I want to eat soft rice.

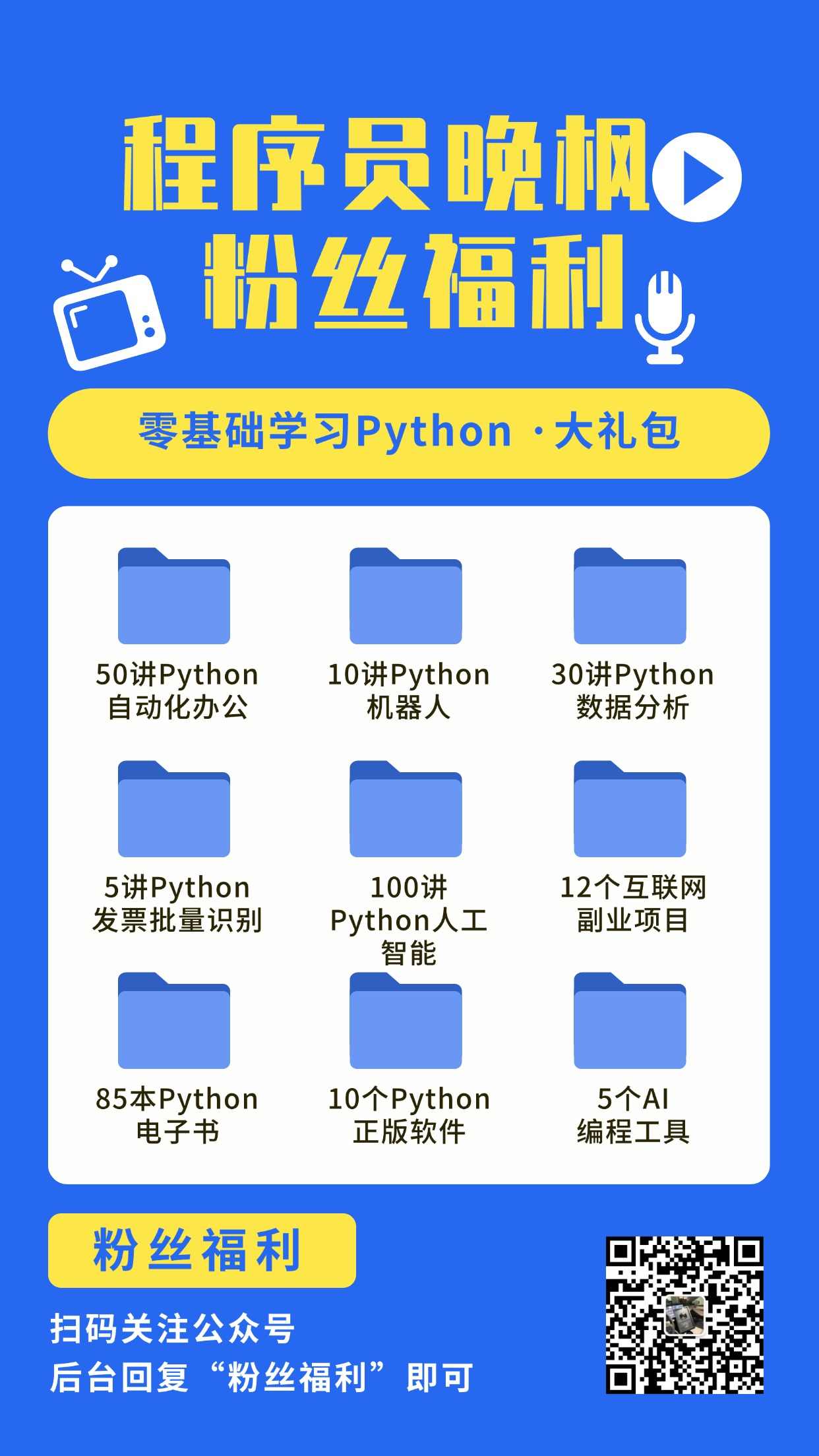

Programmer Wanfeng focuses on AI programming training. Beginners can start doing AI projects after watching the tutorial "30 Lectures · AI Programming Training Camp" that he collaborated on with Turing Community.
